Midjourney Music: How To Extract Audio & MIDI From Pictures

- Ezra Sandzer-Bell

- Jan 25, 2024

- 4 min read

Updated: Oct 10, 2024

When Midjourney first came out in February 2022, it was the only major competitor to DALL-E 2. Text-to-image workflows were still brand new, and many of today's popular text-to-music apps had not reached the public yet. They remained quietly under development from the end of 2022 through the middle of 2023.

Two years later, we find ourselves faced with Midjourney V6 and a variety of competing image creation services, including DALL-E 3, Leonardo AI, and countless others. AI song generators like Splash Music, Suno, Udio, and Riffusion are all generating images to go along with their musical output.

Yet one link remained unsolved until very recently: how exactly does one go about turning an image into music? The video below holds the answer to that question:

In January of 2024, we saw the first signs of generative AI companies turning images directly into audio, including both music and sound effects. The comparison video above shows five examples of Midjourney images being converted to music.

We've found SoundGen to be the best service for converting AI images into accurate musical representations, while Audio-LDM2 seems to be the best for turning pictures into sound effects.

In this article, we'll share a quick overview of Midjourney V6 so that you're up to date on the latest version. We'll also have a little fun and show you how to go on your very own MIDI-Journey with Midjourney, using audio-to-MIDI converters like RipX and Samplab.

Quick Overview of Midjourney V6

Let's start with a few basics for those who aren't familiar with how the service works. Midjourney runs in a Discord server and paid users get access to a private chatbot, where they can issue special commands to retrieve imagery in any style.

The most straightforward command is /imagine, triggering a text area where users can describe exactly what they want to see. If you're curious to learn more about the kinds of prompts people are using in 2024, check out this new guide.

Midjourney V6 can be accessed by adding the flag "--v 6" to your text prompt. Doing so leads to higher image quality and better prompt coherence, meaning that you're more likely to get precisely what you asked for than in previous versions.

V6 also upscales any of the four images it creates roughly twice as fast as previous versions, so the result is almost instantaneous. Text rendering has improved dramatically as well, and you can get stylized phrases by putting the words you want rendered in quotes.
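If you like to keep your prompts consistent, the two ideas above (quoted text and a trailing version flag) can be sketched in a few lines of Python. This is only a sketch: the function name `build_imagine_prompt` and its parameters are our own invention, not part of Midjourney, and the output is simply the text you would paste after /imagine in Discord.

```python
from typing import Optional


def build_imagine_prompt(description: str,
                         quoted_text: Optional[str] = None,
                         version: str = "6") -> str:
    """Assemble prompt text to paste after /imagine (hypothetical helper)."""
    prompt = description.strip()
    if quoted_text:
        # Words wrapped in double quotes are the ones we want rendered as text.
        prompt = f'{prompt} "{quoted_text}"'
    # The version flag is appended to the end of the prompt.
    return f"{prompt} --v {version}"


print(build_imagine_prompt("A neon blue sign that says", quoted_text="hello"))
# prints: A neon blue sign that says "hello" --v 6
```

Keeping the flag in one place makes it easy to rerun the same description against a different version and compare the results.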

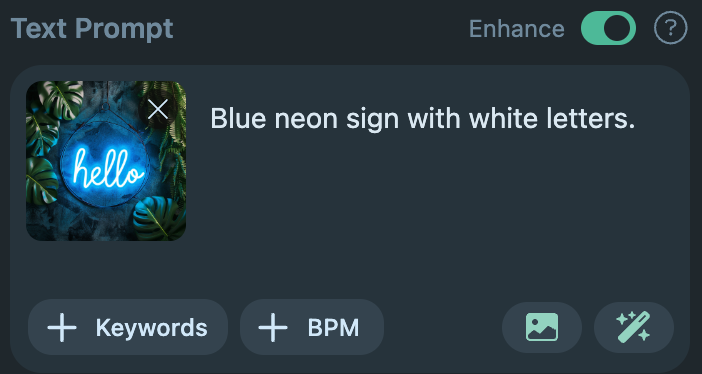

In the example above, we demonstrate what happens when you use a prompt like A neon blue sign that says "hello" --v 6. As you can see, the model successfully captured the look and feel of a modern, cursive neon sign and provided four variations to choose from. Here's what the fourth option looks like once it's been upscaled:

The results are great, but now the real question is how to go about turning your fancy neon blue sign into a song. We'll get into that next.

How to make Midjourney music

Creating music with Midjourney requires a simple modular approach. The AI image generator does not include a music model of its own, but SoundGen's image-to-music feature makes the conversion easy.

To get started, sign up for free at SoundGen.io and log into your account. We've highlighted the picture icon in yellow below, so you know where to click. Pick a Midjourney image that's saved on your local machine and SoundGen will automatically add captions.

I uploaded the blue neon sign and a moment later the image appeared along with a short caption describing the picture. This kind of image-to-text service is actually available in Midjourney as well, using the /describe command. But it's a bit easier and more fun to upload the picture directly to SoundGen.

At this point you can either hit generate to turn it into music, or you can expand on the prompt using the magic wand icon located to the right of the image icon. SoundGen will interpret the image and create musical parameters to pass into the AI music generation model. I recommend trying this process with and without the magic wand, across several pictures, to decide which approach you like more.

We'll keep the 15-second default duration for this demo, but you can extend it up to 30 seconds for the first creation. There's an additional expand feature in SoundGen that you can run repeatedly to make the track as long as you want it to be.

Just like that, we've created a piece of Midjourney music. As the screenshot above shows, you'll have the option to regenerate as many times as you like, using the expand button to build upon your favorite versions. There's a lot more to SoundGen, but in the interest of time I want to move on to another interesting phase of this journey.

MIDI Journey: Starting your Audio to MIDI side quest

Now that you've created a track in SoundGen, you can download it locally and run it through an audio-to-MIDI service like RipX or Samplab. Both services are great, but I have personally been using RipX more often because it offers a broader feature set and higher quality MIDI editing capabilities.

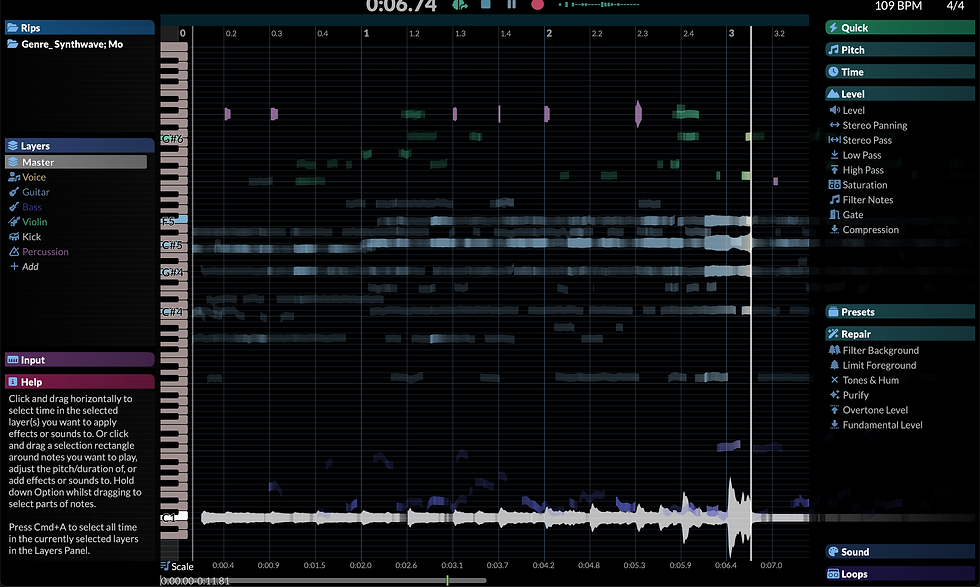

Here's what our blue neon sign Midjourney music looks like as a spectrogram in the RipX DAW. Stem separation has been performed automatically, removing troublesome percussion layers that don't necessarily translate to the MIDI notation format.

Tonal content, like the synths and lead melody, is correctly represented on the audio piano roll. You can move individual notes up and down, à la Melodyne 5, while retaining the timbre of the original instruments. This gives you maximum control over the sound.
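For a sense of what moving a note up or down means numerically, here is a minimal sketch of the standard equal-temperament math. It is not how RipX or Melodyne implement their editing; the function names are ours, and the only assumption is the usual convention that MIDI note 69 is A4 at 440 Hz.

```python
def midi_note_to_hz(note: int) -> float:
    """Frequency of a MIDI note number, assuming A4 (note 69) = 440 Hz."""
    return 440.0 * 2 ** ((note - 69) / 12)


def shift_frequency(freq_hz: float, semitones: int) -> float:
    """Frequency after moving a pitch up (+) or down (-) by semitones."""
    return freq_hz * 2 ** (semitones / 12)


a4 = midi_note_to_hz(69)
print(a4)                        # A4
print(shift_frequency(a4, 12))   # one octave up doubles the frequency
print(shift_frequency(a4, -12))  # one octave down halves it
```

Each step on the piano roll is one semitone, so dragging a note up by twelve steps lands on the same note name an octave higher.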

Any of the instrumental layers can be exported by RipX as MIDI or Audio files. Bring the MIDI files directly into your DAW to assign virtual instruments and make use of your plugin collection.
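Before dragging an exported file into your DAW, you can sanity-check it with a few lines of Python. The sketch below reads only the header chunk of a Standard MIDI File using the standard library. The function name and the returned dictionary keys are our own choices, and the demo builds a tiny header in memory rather than assuming any particular RipX export.

```python
import struct


def read_midi_header(data: bytes) -> dict:
    """Parse the 14-byte header chunk of a Standard MIDI File."""
    if len(data) < 14 or data[:4] != b"MThd":
        raise ValueError("not a Standard MIDI File")
    # Big-endian: 4-byte chunk length, then three 2-byte fields.
    length, fmt, tracks, division = struct.unpack(">IHHH", data[4:14])
    if length < 6:
        raise ValueError("header chunk is too short")
    # If the top bit of division is set, timing is SMPTE-based instead of
    # ticks per quarter note; this sketch reports None in that case.
    uses_smpte = bool(division & 0x8000)
    return {
        "format": fmt,
        "tracks": tracks,
        "ticks_per_quarter": None if uses_smpte else division,
    }


# Demo header built in memory: format 1, 3 tracks, 480 ticks per quarter note.
demo = b"MThd" + struct.pack(">IHHH", 6, 1, 3, 480)
print(read_midi_header(demo))
# prints: {'format': 1, 'tracks': 3, 'ticks_per_quarter': 480}
```

To check a real export, open your own file in binary mode and pass its bytes to `read_midi_header`. A track count of zero, or a `ValueError`, is a hint that the export did not go as planned.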

If the chain of text-to-image-to-audio-to-midi feels too modular, you can also try out AudioCipher. Our text-to-MIDI generator turns words into melodies and chord progressions in the key of your choice. Apply layers of rhythm automation and drag ideas from the plugin directly into your DAW.

That's the whole workflow in a nutshell. There's lots more to explore but we'll leave the rest up to your imagination and experimentation!